Over a decade ago, I saw this talk by John Rauser. Only recently, though, did I come to realize how incredibly influential this talk has been on my career. Gosh what a great talk! You should watch it.

If you operate a complex system, like a SaaS app, you probably have a dashboard showing a few high-level metrics that summarize the system’s overall state. These metrics (“summary statistics”) are essential. They can reveal many kinds of gross changes (both gross “large scale” and gross “ick”) in the system’s state, over many different time scales. Very useful!

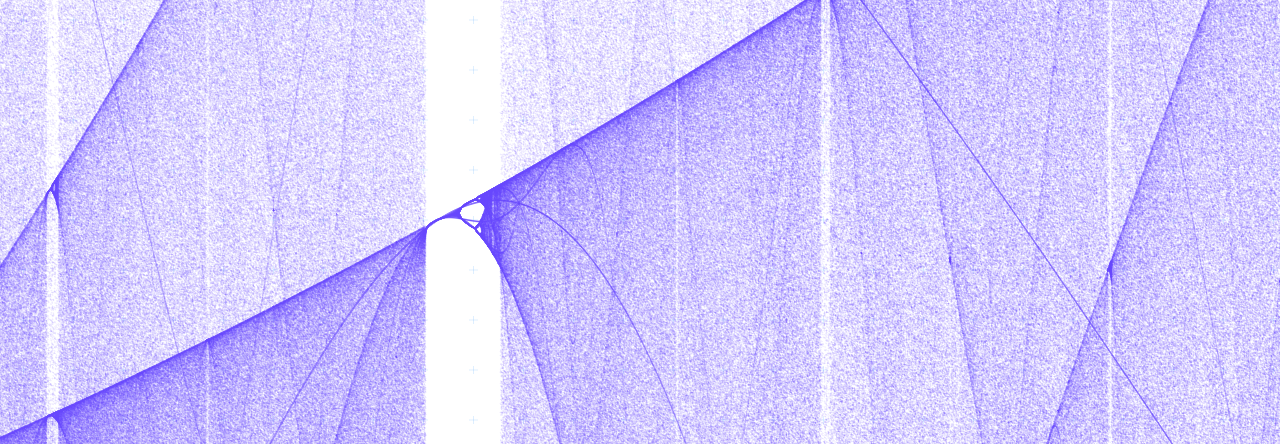

But don’t be misled. Summary statistics reveal certain patterns in the system’s behavior, but they are not identical to the system’s behavior. All summary statistics – yes, even distributions – hide information. They’re lossy. It’s easy to get lulled into the sense that, if an anomaly doesn’t show up in the summary statistics, it doesn’t matter. But a complex system’s behavior is not just curves on a plot. It’s a frothing, many-dimensional vector sum of instant-to-instant interactions.
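To make the lossiness concrete, here's a minimal sketch with made-up numbers: 9,999 requests that take 100 ms and one that takes 20 seconds. The data and the `percentile` helper (a simple nearest-rank calculation) are my own illustrative assumptions, not output from any real monitoring tool.

```python
import math
import statistics

# Hypothetical data: 9,999 requests served in 100 ms, plus one that took 20 s.
latencies_ms = [100.0] * 9_999 + [20_000.0]


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


summary = {
    "mean": statistics.fmean(latencies_ms),
    "p50": percentile(latencies_ms, 50),
    "p99": percentile(latencies_ms, 99),
    "p99.9": percentile(latencies_ms, 99.9),
    "max": max(latencies_ms),
}

for name, value in summary.items():
    print(f"{name:>6}: {value:,.2f} ms")
```

With these invented numbers, the mean moves by about 2%, and p50, p99, and p99.9 don't move at all. Only the max shows that somebody waited 20 seconds.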

When you investigate an anomaly in summary statistics, you’re faced with a small number of big facts. Average latency jumped by 20% at such-and-such time. Write IOPS doubled. API server queue depth started rising at some later time. Usually, you “zoom in” from there to find patterns that might explain these changes.

When you instead investigate a specific instance of anomalous behavior, you start with a large number of small facts. A request to such-and-such an endpoint with this-and-that parameter took however many seconds and crashed on line 99 of thing_doer.rb. None of these small facts tell you anything about the system’s overall behavior: this is just a single event among millions or billions or more. But, nevertheless: these small facts can be quite illuminating if you zoom out.

First of all, this probably isn’t the only time a crash like this has ever occurred. Maybe it’s happening multiple times a day. Maybe it happened twice as often this week as it did last week. Maybe it’s happening every time a specific customer makes a specific API request. Maybe that customer is fuming.
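Zooming out from one crash can be as simple as counting. Here's a rough sketch, assuming you can pull crash records out of your logs as (day, customer, crash site) tuples. The records, the customer names, and the `zoom_out` helper are all hypothetical.

```python
from collections import Counter
from datetime import date

# Hypothetical crash records: (day, customer, crash site).
crashes = [
    (date(2024, 5, 6), "acme", "thing_doer.rb:99"),
    (date(2024, 5, 8), "acme", "thing_doer.rb:99"),
    (date(2024, 5, 13), "acme", "thing_doer.rb:99"),
    (date(2024, 5, 14), "acme", "thing_doer.rb:99"),
    (date(2024, 5, 14), "initech", "other_thing.rb:12"),
    (date(2024, 5, 15), "globex", "thing_doer.rb:99"),
    (date(2024, 5, 16), "acme", "thing_doer.rb:99"),
]


def zoom_out(records, site):
    """Count crashes at one site per ISO week and per customer."""
    matching = [r for r in records if r[2] == site]
    # isocalendar() yields (year, week, weekday); keep (year, week) as the key.
    per_week = Counter(tuple(day.isocalendar())[:2] for day, _, _ in matching)
    per_customer = Counter(customer for _, customer, _ in matching)
    return per_week, per_customer


per_week, per_customer = zoom_out(crashes, "thing_doer.rb:99")
print("per week:", dict(per_week))
print("per customer:", per_customer.most_common())
```

In this made-up data, the crash happened twice in the first week and four times in the second, and five of the six crashes belong to a single customer. Neither fact is visible from the one event you started with.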

And second of all, the reason this event caught our eye in the first place was that it was anomalous. It had some extreme characteristic. Take, for example, a request that was served with very high latency. Perhaps, in the specific anomalous case before us, that extreme latency didn’t cause a problem. But how extreme could it get before it did cause a problem? If it took 20 seconds today, could it take 30 seconds next time? When it hits 30, it’ll time out and throw an error. Or, if multiple requests like this all arrived at the same time, could they exhaust some resource and interfere with other requests?
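One way to go looking for these near misses is to list the requests that succeeded but burned through a big share of their timeout budget. This is only a sketch: the 30-second timeout comes from the example above, while the 50% cutoff, the request records, and the `near_timeout` helper are assumptions I made up for illustration.

```python
TIMEOUT_S = 30.0  # the timeout from the example in the text


def near_timeout(requests, timeout_s=TIMEOUT_S, fraction=0.5):
    """Return requests that finished but used at least `fraction` of the timeout, slowest first."""
    threshold = timeout_s * fraction
    risky = [r for r in requests if threshold <= r["seconds"] < timeout_s]
    return sorted(risky, key=lambda r: r["seconds"], reverse=True)


# Hypothetical request log entries.
requests = [
    {"endpoint": "/reports", "seconds": 20.0},
    {"endpoint": "/reports", "seconds": 0.4},
    {"endpoint": "/export", "seconds": 16.5},
    {"endpoint": "/health", "seconds": 0.01},
]

for r in near_timeout(requests):
    headroom = TIMEOUT_S - r["seconds"]
    print(f'{r["endpoint"]}: {r["seconds"]:.1f}s ({headroom:.1f}s of headroom left)')
```

None of these requests failed, so none of them would show up in an error rate. They're still the ones most likely to fail next.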

If the only anomalies you investigate are those that show up in summary statistics, then you’ll only find problems that have already gotten bad enough to move those needles. But if you dig into specific instances of anomalous behavior – “outliers” – then you can often find problems earlier, before they become crises.

One of the things John Rauser mentions is: “monitor percentiles deep in the tail”. And in my mind I keep asking: why not (also) look at the max? This is what he implies from time to time, but he never quite says it out loud. Is looking at the max taboo, or is there a rationale that I’m missing?

Hmm! That’s a good question.

Personally, I look at maxima all the time. There are always surprising and enlightening things happening at maxima. OOMkills, deadlocks, network routing bugs… stuff that’ll really ruin your day someday unless you fix it first.

Maybe John was generously including maxima under the heading, “percentiles.”

BTW, here’s his previous talk he mentions at the beginning of this one: