EDIT 2023-01-16: Since I wrote this, I’ve gotten really into what I call descriptive engineering and the Maxwell’s Demon approach. I still don’t think it’s worthwhile to try to predict novel failures automatically based on telemetry, but predicting novel failures manually based on intuition and elbow grease is super useful.

I often meet with skepticism when I say that server monitoring systems should only page when a service stops doing its work. It’s one of the suggestions I made in my Smoke Alarms & Car Alarms talk at Monitorama this year. I don’t page on high CPU usage, or rapidly-growing RAM usage, or anything like that. Skeptics usually ask some variation on:

> If you only alert on things that are already broken, won’t you miss opportunities to fix things before they break?

The answer is a clear and unapologetic yes! Sometimes that will happen.

It’s easy to be certain that a service is down: just check whether its work is still getting done. It’s even pretty easy to detect a performance degradation, as long as you have clearly defined what constitutes acceptable performance. But it’s orders of magnitude more difficult to reliably predict that a service will go down soon unless a human intervenes.
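Here is a minimal sketch of what a "page only when the work stops" rule might look like. Everything in it is hypothetical: `WorkWindow`, `should_page`, and both thresholds are names and numbers invented for illustration, not taken from any particular monitoring tool. The thing to notice is what the function does not look at: there is no CPU or RAM input at all.

```python
from dataclasses import dataclass


@dataclass
class WorkWindow:
    """Hypothetical summary of one service's work over a recent time window."""
    jobs_expected: int      # units of work that should have completed
    jobs_completed: int     # units of work that actually completed
    p95_latency_ms: float   # observed 95th-percentile latency


def should_page(window: WorkWindow,
                min_completion_ratio: float = 0.99,
                max_p95_latency_ms: float = 500.0) -> bool:
    """Page only when the work is not getting done, or not done acceptably.

    Both thresholds are illustrative assumptions. What matters is that they
    are written down in advance as the definition of acceptable performance.
    """
    if window.jobs_expected == 0:
        return False  # nothing was asked of the service, so nothing is failing
    completion_ratio = window.jobs_completed / window.jobs_expected
    if completion_ratio < min_completion_ratio:
        return True   # the service has stopped doing (enough of) its work
    # Work is getting done; page only if it is slower than we agreed to accept.
    return window.p95_latency_ms > max_p95_latency_ms


healthy = WorkWindow(jobs_expected=1000, jobs_completed=1000, p95_latency_ms=120.0)
stalled = WorkWindow(jobs_expected=1000, jobs_completed=400, p95_latency_ms=120.0)
slow = WorkWindow(jobs_expected=1000, jobs_completed=1000, p95_latency_ms=900.0)

for name, window in [("healthy", healthy), ("stalled", stalled), ("slow", slow)]:
    print(name, should_page(window))
```

In this sketch a box could be pinned at 100% CPU and still not page anyone, as long as the work keeps completing within the agreed latency.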

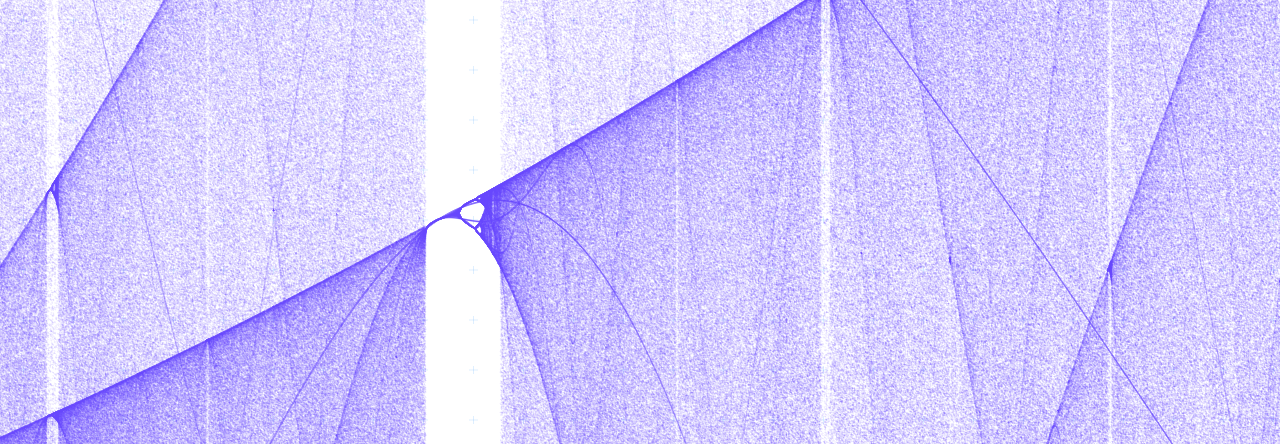

We like to operate our systems at the very edge of their capacity. This is true not only in tech, but in all sectors: from medicine to energy to transportation. And it makes sense: we bought a certain amount of capacity, so why would we waste any? But a side effect of this insatiable lust for capacity is that it makes the line between working and not working extremely subtle. As Mark Burgess points out in his thought-provoking In Search of Certainty, this is a consequence of nonlinear dynamics (or “chaos theory”), and our systems are vulnerable to it as long as we operate them so close to an unstable region.

But we really, really want to predict failures! It’s tempting to try to develop increasingly complex models of our nonlinear systems, aiming for perfect failure prediction. Unfortunately, since these systems are almost always operating under an unpredictable workload, we end up having to couple these models tightly to our implementation: number of threads, number of servers, network link speed, JVM heap size, and so on.

This is just like overfitting a regression in statistics: it may work incredibly well for the conditions that you sampled to build your model, but it will fail as soon as new conditions are introduced. In short, predictive models for nonlinear systems are fragile. So fragile that they’re not worth the effort to build.
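To make the fragility concrete, here is a toy sketch under loudly stated assumptions. The "service" is a textbook M/M/1 queue, whose mean response time is S / (1 - ρ); that formula is swapped in purely for illustration and is not a claim about any real system. The "model" is a straight line fitted to latency observed at 10% to 50% load. This is not overfitting in the strict statistical sense, but it shows the same symptom: the fit looks fine on the conditions that were sampled and falls apart on a condition that wasn't.

```python
def mm1_latency_ms(utilization: float, service_time_ms: float = 10.0) -> float:
    """Mean response time of a textbook M/M/1 queue: S / (1 - rho)."""
    return service_time_ms / (1.0 - utilization)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# "Sampled conditions": the service was only ever observed at 10%..50% load.
sampled_load = [0.10, 0.20, 0.30, 0.40, 0.50]
sampled_latency = [mm1_latency_ms(u) for u in sampled_load]
slope, intercept = fit_line(sampled_load, sampled_latency)


def predicted_latency_ms(utilization: float) -> float:
    """The fitted linear model's guess at latency for a given load."""
    return slope * utilization + intercept


# Worst error inside the sampled range, versus the error at a new condition.
in_sample_error = max(abs(predicted_latency_ms(u) - mm1_latency_ms(u))
                      for u in sampled_load)
new_load = 0.95
out_of_sample_error = abs(predicted_latency_ms(new_load) - mm1_latency_ms(new_load))

print(f"worst error at sampled loads: {in_sample_error:.1f} ms")
print(f"error at {new_load:.0%} load: {out_of_sample_error:.1f} ms")
```

At the sampled loads the line stays within about a millisecond of the toy queue. At 95% load, right where the nonlinearity bites, it is off by well over a hundred milliseconds.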

Instead of trying to buck the unbuckable (which is a bucking waste of time), we should seek to capture every failure and let our system learn from it. We should make systems that are aware of their own performance and the status of their own monitors. That way we can build feedback loops and self-healing into them: a strategy that won’t crumble when the implementation or the workload takes a sharp left.

This makes sense to me, but I’m trying to understand why it doesn’t seem like it would make sense for security. Is it just that the consequences of downtime are (usually) far less pronounced than the consequences of a breach?

Hah! Good point. You wouldn’t want to wait to notify Security of suspicious activity until after an attacker had brought your site down.

But I’m making a distinction here between notifying and paging. There are many things I might want to get *notified* about because they’re worthy of my attention, but not *paged* about. Both security and ops teams should spend time investigating surprising, novel phenomena in production – they just don’t need to be paged about them.

Really, though, I would argue that this page-only-when-down principle works for security too. It’s just that the systems security is responsible for are generally safeguards rather than the actual production system. For example, if a TLS key is compromised, then that key is no longer doing its job. The fence is no longer electrified, as it were, and security needs to respond immediately.

Ah, perfect! That notify vs. page nuance helps me better contextualize things. Thank you very much!