“Stop Expecting That.”

When you use a program a lot, you start to notice its quirks. If you’re a programmer yourself, you start to develop theories about why the quirks exist, and how you’d fix them if you had the time or the source. If you’re not a programmer, you just shrug and work around the quirks.

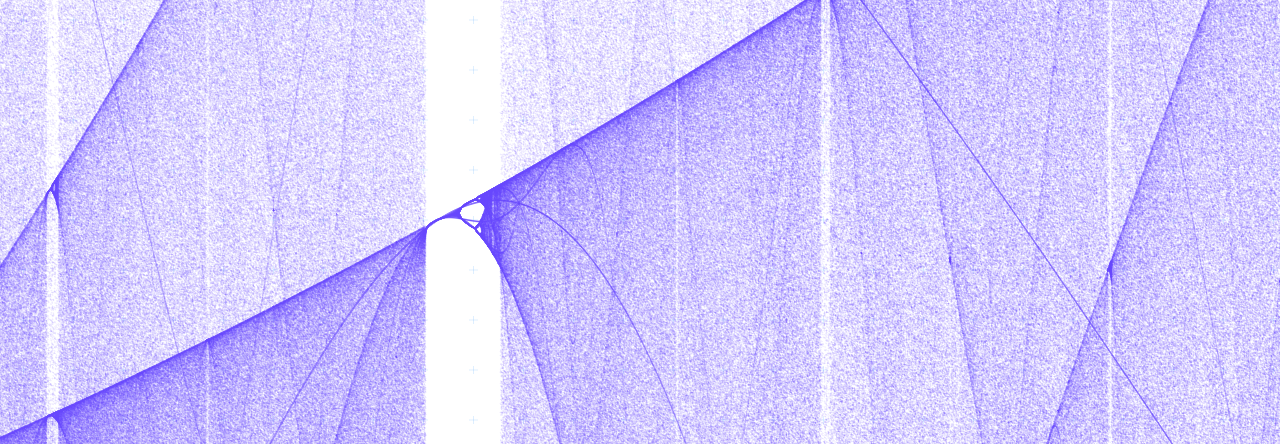

I review about 400 virtual flash cards a day while studying for Jeopardy, so I’ve really started to pick up on the quirks of the flash card software I use. One quirk in particular really bothered me: the documentation, along with the first-tier support team, claims that when cards come up for review they will be presented in a random order. But I’ve noticed that, far from being truly random, the program presents cards in bunches of 50: old cards in the first bunch, then newer and newer bunches of cards. By the time I get to my last 50 cards of the day, they’re all less than two weeks old.
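To make the pattern concrete, here is a minimal Python sketch of the difference between the ordering the documentation promises and the ordering described above. The program's actual queueing logic isn't known here; `batched_shuffle`, `full_shuffle`, the `age_days` field, and the made-up card ages are all hypothetical, chosen only to reproduce the observed "bunches of 50, oldest first" behavior.

```python
import random


def full_shuffle(cards, rng):
    """What the docs suggest: shuffle the whole day's queue at once."""
    shuffled = list(cards)
    rng.shuffle(shuffled)
    return shuffled


def batched_shuffle(cards, rng, batch_size=50):
    """A guess at the observed behavior: sort oldest-first, then
    shuffle only within consecutive batches of `batch_size` cards."""
    by_age = sorted(cards, key=lambda c: c["age_days"], reverse=True)
    result = []
    for start in range(0, len(by_age), batch_size):
        batch = by_age[start:start + batch_size]
        rng.shuffle(batch)  # random inside the batch, but never across batches
        result.extend(batch)
    return result


def mean_age(cards):
    """Average age, in days, of a list of cards."""
    return sum(c["age_days"] for c in cards) / len(cards)


# Hypothetical data: 400 cards whose ages are simply 0..399 days.
rng = random.Random(0)
cards = [{"id": i, "age_days": i} for i in range(400)]

batched = batched_shuffle(cards, rng)
uniform = full_shuffle(cards, rng)

print("batched: first 50 mean age", mean_age(batched[:50]),
      "| last 50 mean age", mean_age(batched[-50:]))
print("uniform: first 50 mean age", mean_age(uniform[:50]),
      "| last 50 mean age", mean_age(uniform[-50:]))
```

Under these assumptions, each bunch of 50 looks random on its own, yet the last bunch of the day contains only the newest cards. Someone who reviews 50 or fewer cards a day would see only one bunch and could never tell the two orderings apart.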

So I submitted a bug report, complete with a scatterplot demonstrating this clear pattern. I explained, “I would expect the cards to be shuffled evenly, but that doesn’t appear to be the case.” And do you know what the lead developer of the project told me?

“Stop expecting that.”

Not in so many words, of course, but there you have it. The problem was not in the software; it was in my expectations.

It’s a common reaction among software developers. We think “Look, that’s just the way it works. I understand why it works that way and I can explain it to you. So, you see, it’s not really a bug.” And as frustrating as this attitude is, I can’t say I’m immune to it myself. I’m in ops, so the users of my software are usually highly technical. I can easily make them understand why a weird thing keeps happening, and they can figure out how to work around the quirk. But the “stop expecting that” attitude is wrong, and it hurts everyone’s productivity, and it makes software worse. We have to consciously reject it.

Quirks are bugs.

A bug is when the program doesn’t work the way the programmer expects.

A quirk is when the program doesn’t work the way the user expects.

What’s the difference, really? Especially in the open-source world, where every user is a potential developer, and all your developers are users?

Quirks and bugs can both be worked around, but a workaround requires the user to learn arbitrary procedures which aren’t directly useful, and which aren’t connected in any meaningful way to their mental model of the software.

Quirks and bugs both make software less useful. They make users less productive. Neglected, they necessitate a sort of oral tradition — not dissimilar from superstition — in which users pass the proper set of incantations from generation to generation. Software shouldn’t be like that.

Quirks and bugs both drive users away.

Why should we treat them differently?

Stop “Stop Expecting That”ing

I’ve made some resolutions that I hope will gradually erase the distinction in my mind between quirks and bugs.

When I hear that a user encountered an unexpected condition in my software, I will ask myself how they developed their incorrect expectation. As they’ve used the program, has it guided them toward a flawed understanding? Or have I just exposed some internal detail that should be covered up?

If I find myself explaining to a user how my software works under the hood, I will redirect my spiel toward a search for ways to abstract away those implementation details instead of requiring the user to understand them.

If users are frequently confused about a particular feature, I’ll take a step back and examine the differences between my mental model of the software and the users’ mental model of it. I’ll then adjust one or both in order to bring them into congruence.

Anything that makes me a stronger force multiplier is worth doing.

Great post. As someone who has spent years as a release engineer, with the job of enforcing good process, I get a variation on that attitude: the practiced eye roll that says “You are making me do scut work and I do NOT appreciate it”.

It’s all about discipline. Correctness is important, repeatability is important, and accurately communicating with your users so they have reasonable expectations of your software is important too.

If that developer had simply advertised “shuffling is pseudo-random based on stacks” you wouldn’t have had an issue, right? But it’s flashier and sells more software to say “shuffling is truly random” so that’s what they do.

I think that attitude is very similar. I mean, full disclosure: I do not appreciate being made to do scut work either. But at a small company, you don’t (and shouldn’t) have many scuts around, so you just have to get it done.

If that developer had advertised “shuffling is pseudo-random based on stacks”, I think I still would’ve had an issue. Even the first-tier support guy told me “It should be random — are you finding that it’s not?” Only when the lead developer stepped in did I find out that, as someone with more than 50 cards a day, I was an edge case.